Why Sample Evaluation Creates False Confidence in Corporate Eco-Tableware Gift Type Selection Across UAE Programmes

There is a specific moment in the corporate gift procurement cycle where confidence peaks and accuracy collapses simultaneously. It happens when the evaluation committee holds a finished sample in their hands — a beautifully crafted piece of eco-tableware, perhaps a bamboo and stainless steel cutlery set in a magnetic-close presentation box, or a palm leaf dinnerware collection with laser-engraved corporate branding — and collectively agrees that this is the gift type that will represent the organisation. The sample looks exceptional. The finish is flawless. The branding is crisp. The packaging opens with satisfying precision. Every person in the room is evaluating the gift type based on this physical object, and every person in the room is making the same systematic error: they are treating the sample as a representative unit of what will be delivered at scale, when in reality the sample represents the absolute ceiling of what the production process can achieve under ideal, non-scalable conditions.

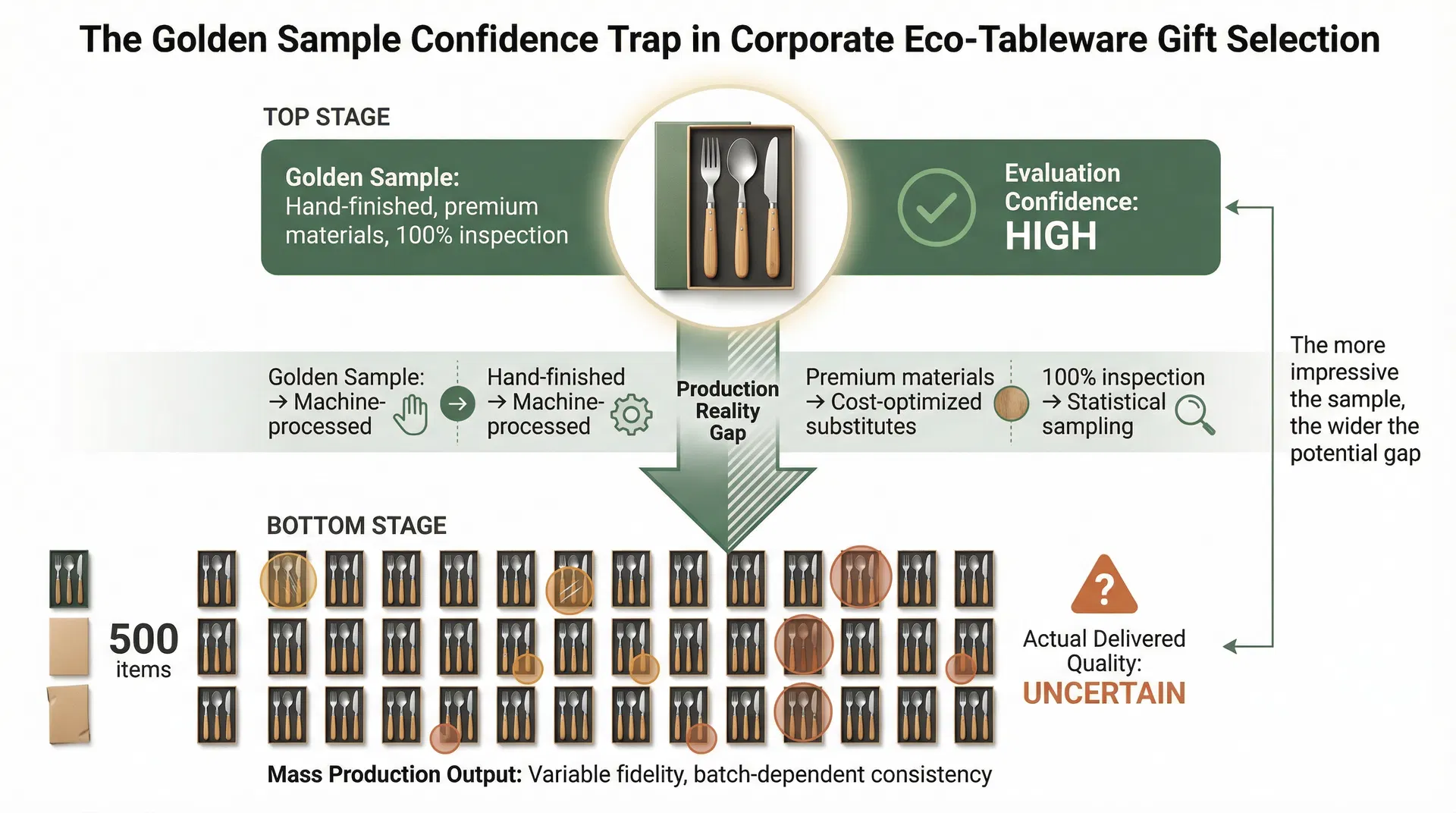

This is not a quality control failure. It is an evaluation methodology failure. The sample approval process, as it operates in most UAE corporate procurement workflows, is structurally designed to produce overconfidence in the selected gift type. A sample is produced slowly, often by hand, by the most experienced technicians on the production floor. It receives individual attention at every stage — the material selection is deliberate, the finishing is meticulous, the assembly is inspected at each step, and the final piece undergoes what amounts to 100% quality verification. This is appropriate for its purpose: the sample exists to demonstrate what the production process is capable of producing. But capability under ideal conditions and consistency under production conditions are fundamentally different things, and the gap between them varies dramatically depending on the complexity of the gift type being evaluated.

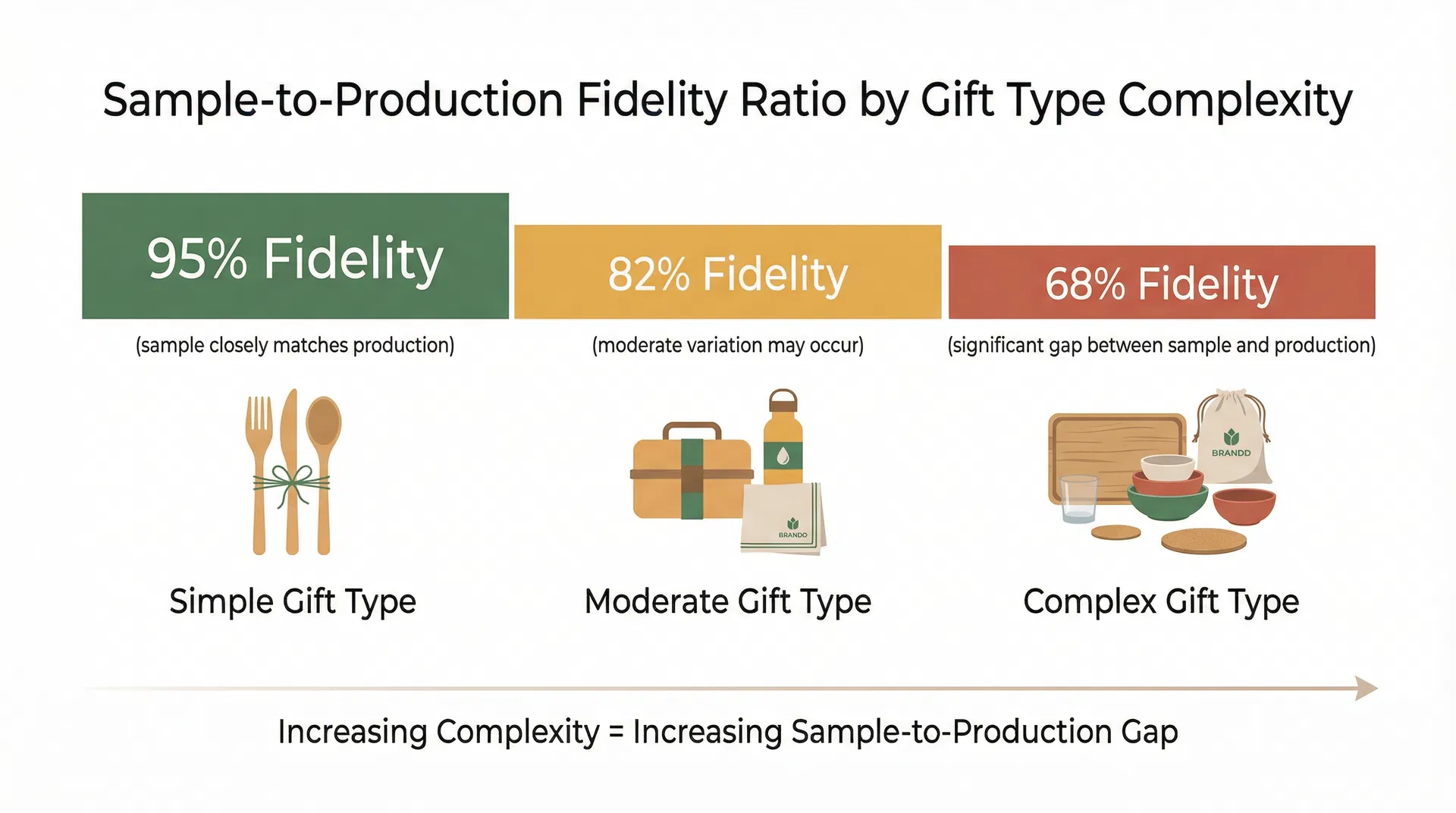

In practice, this is often where corporate gift type decisions go wrong: not because the wrong category was chosen, but because the evaluation process itself created a distorted picture of what each category would actually look like when delivered in quantities of 500, 2,000 or 5,000 units. A single-material item like a set of bamboo chopsticks with pad-printed branding has what we might call a high sample-to-production fidelity ratio: the sample and the production unit are nearly identical because the manufacturing process is simple, the variables are few, and the quality control checkpoints are straightforward. The bamboo is cut, shaped, sanded, printed, and packed. Each step is mechanically consistent. The sample you approve is, within minor tolerance variations, what you will receive. But a multi-material presentation set (say, a bamboo-handled stainless steel cutlery collection with individually engraved pieces, a wheat straw bowl with colour-matched corporate branding, and a custom-moulded recycled PET tray inside a rigid box with foil-stamped Arabic and English text) has a dramatically lower fidelity ratio. The sample may be stunning. The production run will be different.

The difference is not random. It is structural. Every additional material in a gift set introduces a new variable that must be controlled across the entire production run. Bamboo grain patterns vary between batches. Stainless steel surface finishes shift with polishing wheel wear. Colour matching on wheat straw composites is sensitive to the ratio of straw fibre to binding agent, which fluctuates with raw material sourcing. Laser engraving depth depends on machine calibration that drifts over extended runs. Foil stamping adhesion on rigid box surfaces is affected by ambient humidity during the stamping process. Each of these variables is manageable in isolation — and each is perfectly managed when producing a single sample. But in a production run of 2,000 units, these variables interact. The bamboo grain variation that was invisible on one sample becomes a visible inconsistency across a batch. The engraving depth that was perfect on the sample unit shifts slightly as the laser head accumulates heat over hundreds of consecutive operations. The foil stamping that adhered flawlessly to the sample box shows micro-lifting on units produced during the afternoon shift when the factory floor humidity rises.
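One way to see why each added material matters is to multiply per-variable in-tolerance rates. The sketch below makes a loud simplifying assumption: the variables are independent, which the interactions described above (humidity or heat affecting several steps at once) may violate. The rates themselves are hypothetical, chosen only to illustrate the arithmetic, and `combined_conformance` is a name introduced here, not an industry formula.

```python
from math import prod


def combined_conformance(step_rates: list[float]) -> float:
    """Probability that a unit is in tolerance on every controlled variable.

    ASSUMPTION: variables are independent, so the rates simply multiply.
    Real production variables can interact, so treat this as a simplification.
    """
    for p in step_rates:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"rate out of range: {p}")
    return prod(step_rates)


# Hypothetical per-variable in-tolerance rates, for illustration only.
single_material = [0.99, 0.99, 0.99]  # e.g. cutting, sanding, pad printing
multi_material = [0.97] * 10          # e.g. grain, polish, colour match, engraving depth, foil adhesion, ...

print(f"single-material set: {combined_conformance(single_material):.1%}")
print(f"multi-material set:  {combined_conformance(multi_material):.1%}")
```

With these illustrative inputs, three well-controlled variables keep roughly 97% of units fully in tolerance, while ten slightly less controlled variables leave only about 74%, even though no single variable looks alarming on its own.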

The evaluation committee that approved the sample did not see any of this. They could not have seen it, because the sample, by definition, was produced under conditions that do not exist during mass production. And here is the critical misjudgment: the committee used the sample to evaluate the gift type, not just the specific item. They concluded that a multi-material presentation set is the appropriate gift type for their programme because the sample demonstrated that a multi-material presentation set can look exceptional. What they did not evaluate — and what the standard sample approval process does not require them to evaluate — is whether a multi-material presentation set can look consistently acceptable across the full production quantity. The gift type decision was made on the basis of peak performance, not sustainable performance.

From a compliance and quality assurance perspective, this creates a specific category of risk that many procurement teams underestimate. The risk is not that the production run will be defective; it is that the production run will be adequate but noticeably different from the approved sample, and that this difference will be most pronounced in exactly the gift types that looked most impressive during evaluation. The more complex the gift type, the more impressive the sample, and the wider the gap between sample quality and production reality. This is counterintuitive for procurement teams, who naturally assume that a more impressive sample indicates a more capable production process. In fact, it often indicates something different: a production process capable of extraordinary output under controlled conditions but exposed to greater variability at scale.

We see this pattern repeatedly in the UAE corporate gifting market, where the cultural expectation for presentation quality is exceptionally high and where gift programmes frequently serve protocol functions that demand consistency across every unit. A government-facing sustainability initiative that distributes 800 eco-tableware gift sets to delegates at a conference cannot afford visible quality variation between units. If delegate number 1 receives a set with perfectly aligned engraving and flawless foil stamping, and delegate number 400 receives a set with slightly off-centre engraving and micro-lifted foil edges, the programme has failed its representational purpose — even though both units would pass a standard quality inspection. The sample approval process did not account for this because the sample, being a single unit, could not demonstrate inter-unit variation. The evaluation committee approved a gift type based on a data point of one.

The practical consequence is that organisations frequently select gift types that are more complex than their programme can reliably deliver at the required quality level. A simpler gift type — one with fewer materials, fewer finishing processes, and fewer assembly steps — would have produced a less dramatic sample but a more consistent production run. The bamboo cutlery set with a single-colour screen print in a kraft sleeve may not generate the same reaction in the evaluation room as the multi-material presentation set, but it will generate a consistent reaction from every recipient because the gap between sample and production is negligible. The evaluation process, however, is biased toward the dramatic sample because the committee is implicitly comparing gift types on the basis of their best possible expression, not their most likely production expression.

This bias is compounded by the timing of the sample evaluation within the procurement cycle. By the time samples arrive, the organisation has typically already narrowed its options to two or three gift types, invested time in briefing the supplier, and built internal expectations around the programme. The sample evaluation is not a neutral assessment — it is a confirmation exercise. The committee wants to approve a sample because approval means the programme moves forward. Rejection means delay, re-briefing, and potentially revisiting the gift type decision entirely. This creates what quality professionals recognise as confirmation bias in the approval process: the committee evaluates the sample looking for reasons to approve rather than reasons to question. Minor imperfections that would be visible in a production context are overlooked because the single sample, held under favourable lighting in a conference room, presents its best face. The committee's confidence in the gift type is highest at exactly the moment when their information about production reality is lowest.

There is a further dimension to this that relates specifically to how eco-tableware materials behave differently from conventional corporate gift materials. Natural materials — bamboo, palm leaf, wheat straw, sugarcane bagasse — have inherent variability that synthetic materials do not. Two sheets of acrylic cut from the same batch will be virtually identical. Two pieces of bamboo cut from the same culm will have different grain patterns, different density gradients, and different surface characteristics. This natural variability is part of the material's appeal in an eco-gifting context — it communicates authenticity and sustainability. But it also means that the sample-to-production fidelity ratio for eco-tableware is structurally lower than for conventional corporate gifts made from uniform synthetic materials. The sample bamboo piece was selected, consciously or unconsciously, for its attractive grain pattern and consistent colouring. The production run will include the full natural range of grain patterns and colour variations. The gift type that was selected partly because of its natural, authentic appearance will, at production scale, display more variation than the committee anticipated — not because of any manufacturing failure, but because natural materials are inherently variable and the sample concealed this variability by presenting a single, curated specimen.
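The curation effect can be illustrated with a small simulation. The sketch below assumes, purely for illustration, that the appearance of natural bamboo pieces can be scored on a numeric scale following a normal distribution (mean 70, standard deviation 8; both values hypothetical). The sample is modelled as the best of `k` candidate pieces, while a production unit is a random draw.

```python
import random
import statistics


def curated_vs_typical(k: int, trials: int, seed: int = 0) -> tuple[float, float]:
    """Average score of a hand-picked 'best of k' specimen versus a random unit.

    ASSUMPTION: appearance scores are hypothetical and normally distributed
    (mean 70, standard deviation 8), chosen only to illustrate selection bias.
    """
    rng = random.Random(seed)
    curated, typical = [], []
    for _ in range(trials):
        candidates = [rng.gauss(70, 8) for _ in range(k)]
        curated.append(max(candidates))   # the piece selected as the sample
        typical.append(rng.gauss(70, 8))  # an arbitrary unit from the run
    return statistics.mean(curated), statistics.mean(typical)


best, typical = curated_vs_typical(k=10, trials=10_000)
print(f"curated sample average: {best:.1f}")
print(f"typical unit average:   {typical:.1f}")
```

Under these assumptions, picking the best of ten pieces lands, on average, about one and a half standard deviations above a typical unit. The committee's reference point is therefore shifted upward before any manufacturing variable comes into play.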

The correction for this systematic overconfidence is not to abandon sample evaluation — samples remain essential for verifying design intent, material quality, and branding execution. The correction is to change what the sample evaluation is understood to represent. Rather than treating the sample as a preview of the delivered product, procurement teams should treat it as a demonstration of the production ceiling — the best possible output under ideal conditions. The relevant question is not "does this sample meet our standards?" but "what percentage of the production run will meet this standard, and is that percentage acceptable for our programme's requirements?" For a simple, single-material gift type, the answer may be 95% or higher. For a complex, multi-material presentation set, the answer may be 75% or lower. Both answers are honest. But they lead to very different gift type decisions, and the standard sample approval process does not require the question to be asked.
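The question "what percentage of the production run will meet this standard?" can only be estimated from more than one unit. The sketch below uses the Wilson score interval, a standard confidence interval for a proportion that behaves sensibly when every inspected unit passes. It assumes the inspected units behave like random draws from the run, which a pre-production batch only approximates. The programme size of 2,000 units is illustrative.

```python
from math import sqrt


def wilson_interval(passed: int, inspected: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the true pass rate (z = 1.96 gives ~95% confidence).

    `passed` is the number of inspected units that meet the approved sample standard.
    """
    if inspected <= 0 or not 0 <= passed <= inspected:
        raise ValueError("need 0 <= passed <= inspected and inspected > 0")
    p_hat = passed / inspected
    denom = 1 + z**2 / inspected
    centre = (p_hat + z**2 / (2 * inspected)) / denom
    margin = z * sqrt(p_hat * (1 - p_hat) / inspected + z**2 / (4 * inspected**2)) / denom
    return max(0.0, centre - margin), min(1.0, centre + margin)


programme_size = 2000  # illustrative order quantity
for passed, inspected in [(1, 1), (10, 10), (8, 10)]:
    low, high = wilson_interval(passed, inspected)
    worst_case = round(programme_size * (1 - low))
    print(f"{passed}/{inspected} passed: pass rate {low:.0%} to {high:.0%}, "
          f"up to ~{worst_case} of {programme_size} units below standard")
```

A single approved sample is statistically consistent with a true pass rate as low as about 21%, while ten passing units narrow the plausible floor to roughly 72%. Translating the lower bound into a unit count makes the programme-level consequence explicit.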

Organisations that navigate this effectively tend to request not one sample but a small pre-production batch — typically five to ten units produced under conditions that approximate, though cannot fully replicate, the production environment. A pre-production batch reveals inter-unit variation that a single sample cannot. It shows the range of acceptable output, not just the peak. It allows the evaluation committee to assess whether the gift type they have selected can deliver consistent quality across the full programme, or whether the impressive sample was an outlier that the production process will not reliably reproduce. This is a more expensive and time-consuming evaluation method, but for programmes where consistency is critical — government protocol gifts, C-suite client appreciation, high-visibility sustainability showcases — the cost of discovering production variability after delivery is substantially higher than the cost of discovering it during evaluation.
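What a pre-production batch adds can be shown with one measured attribute. The sketch below assumes a hypothetical measurement, the engraving offset from centre in millimetres, with a hypothetical tolerance of 0.5 mm; the measurements are invented for illustration. A single sample yields one value but no measure of unit-to-unit spread, while a batch yields both.

```python
import statistics
from dataclasses import dataclass
from typing import Optional


@dataclass
class BatchSpread:
    n: int
    all_within_tolerance: bool
    stdev_mm: Optional[float]  # None when fewer than two units were measured
    range_mm: Optional[float]


def batch_spread(offsets_mm: list[float], tolerance_mm: float) -> BatchSpread:
    """Summarise inter-unit variation in one measured attribute of a batch."""
    if not offsets_mm:
        raise ValueError("need at least one measurement")
    within = all(abs(x) <= tolerance_mm for x in offsets_mm)
    if len(offsets_mm) < 2:
        # A single sample shows one value, not how much units differ from each other.
        return BatchSpread(len(offsets_mm), within, None, None)
    return BatchSpread(
        n=len(offsets_mm),
        all_within_tolerance=within,
        stdev_mm=statistics.stdev(offsets_mm),
        range_mm=max(offsets_mm) - min(offsets_mm),
    )


# Hypothetical engraving offsets from centre (mm); illustrative values only.
approved_sample = [0.1]
pre_production = [0.1, 0.3, -0.2, 0.4, 0.0, 0.6, -0.1, 0.5]

print(batch_spread(approved_sample, tolerance_mm=0.5))
print(batch_spread(pre_production, tolerance_mm=0.5))
```

In this invented batch, the approved sample sits comfortably within tolerance, but one of eight batch units does not, and the batch reveals a spread of 0.8 mm that the single sample could never have shown.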

The deeper issue, and the one that connects this evaluation methodology problem to the broader question of how different gift types serve different business needs, is that the sample confidence trap systematically pushes organisations toward more complex gift types than their programmes require or their production partners can consistently deliver. The evaluation process rewards complexity because complexity produces impressive samples. But the programme's success depends on consistency, and consistency favours simplicity. The gift type that best serves the business need is often not the one that produced the most impressive sample — it is the one whose sample most accurately represents what every recipient will actually receive. Until the evaluation methodology accounts for the fidelity ratio between sample and production, organisations will continue to select gift types based on ceiling performance and be disappointed by floor performance, a pattern that repeats across procurement cycles because the sample, each time, renews the same false confidence that the previous production run should have dispelled.

Emirates Eco Tableware

Specialist supplier of branded eco-friendly cutlery for UAE corporate and hospitality markets